In a move that emphasizes the importance of collaboration and community in technological advancement, Nvidia has open-sourced elements of the Run:ai platform, most notably the KAI Scheduler. This Kubernetes-native GPU scheduling solution, now available under the Apache 2.0 license, reflects Nvidia’s strategy of fostering both open-source initiatives and robust enterprise AI infrastructure. The release invites developers, engineers, and researchers to engage with the platform, share feedback, and drive innovation, a clear message that the company values the collective input of the tech community.

The KAI Scheduler’s open-source model allows a wider audience to appreciate its capabilities and to contribute to its evolution. As part of the NVIDIA Run:ai platform, it serves not just the enterprise but the broader spectrum of the AI development community, bridging a critical gap between proprietary solutions and the open-source ecosystem. The implications of this move could be far-reaching, potentially accelerating the pace of advancements in GPU scheduling technology.

Addressing Resource Management Challenges

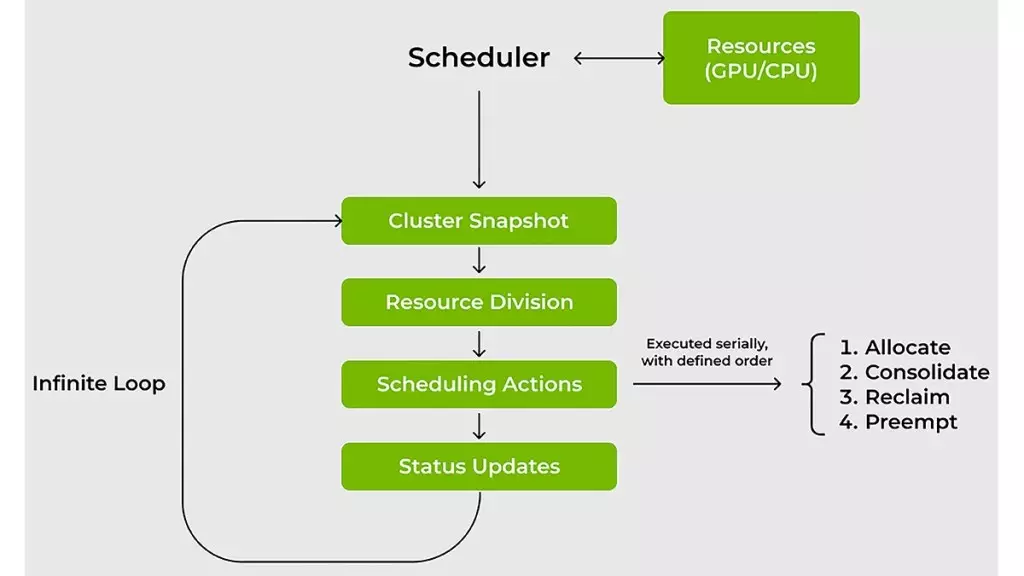

One of the core challenges facing machine learning (ML) and IT teams revolves around efficiently managing AI workloads across GPUs and CPUs. Traditional resource schedulers often falter when confronted with the dynamic and unpredictable demands of AI applications. It’s this very gap that the KAI Scheduler seeks to bridge. By specifically designing a solution that responds to fluctuating GPU demands and reduces wait times for compute access, Nvidia aims to streamline operations in environments where rapid changes in resource requirements are the norm.

The key technical strength of the KAI Scheduler is its capacity for real-time recalculation of resource allocation. By continuously tracking workload demands, the scheduler matches GPU resources to current needs without requiring constant oversight from administrators. In an operational landscape where every second counts and GPU availability can dictate the success of training processes, this automation presents a significant efficiency upgrade.
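To make the idea of recalculating allocations concrete, here is a minimal sketch of max-min fair sharing, a textbook technique that can simply be re-run whenever demand changes. It is an illustration under assumptions, not KAI Scheduler's actual algorithm: the function name `fair_shares` and the queue names are hypothetical.

```python
from typing import Dict


def fair_shares(total: float, demand: Dict[str, float]) -> Dict[str, float]:
    """Split `total` GPUs across queues with max-min fairness.

    Hypothetical illustration only; not taken from KAI Scheduler.
    Queues that want less than an equal slice get exactly what they ask
    for, and the leftover is split evenly among the remaining queues.
    """
    shares = {queue: 0.0 for queue in demand}
    active = {queue for queue, wanted in demand.items() if wanted > 0}
    remaining = float(total)

    while active and remaining > 1e-9:
        equal_slice = remaining / len(active)
        # Queues whose unmet demand fits inside one equal slice.
        satisfied = {q for q in active if demand[q] - shares[q] <= equal_slice}
        if not satisfied:
            # Everyone wants more than an equal slice: hand out the slices.
            for q in active:
                shares[q] += equal_slice
            break
        for q in satisfied:
            remaining -= demand[q] - shares[q]
            shares[q] = float(demand[q])
        active -= satisfied

    return shares


if __name__ == "__main__":
    # Re-running the function after demand changes yields the new split.
    print(fair_shares(8, {"team-a": 2, "team-b": 4, "team-c": 10}))
    print(fair_shares(8, {"team-a": 2, "team-b": 1, "team-c": 10}))
```

In the second call, `team-b` asks for less, and the GPUs it frees flow to the queue that still has unmet demand, with no administrator involved.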

The Significance of Dynamic Scheduling

Time is a crucial commodity for machine learning engineers. The KAI Scheduler reduces cumbersome wait times through a combination of innovative scheduling tactics, such as gang scheduling, GPU sharing, and hierarchical queuing. These features enable teams to submit tasks in batches, allowing them to focus on other critical aspects of their projects. By simplifying task submissions and ensuring prompt initiation of processes when resources are available, the KAI Scheduler enhances productivity while maintaining a fair allocation framework.
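Gang scheduling is the least intuitive of these tactics, so a small sketch may help. The idea is all-or-nothing placement: a distributed job starts only if every one of its pods can be placed at once. The helper below is hypothetical and uses a simple first-fit search; it is not KAI Scheduler code, and a greedy search like this can miss placements that a smarter one would find.

```python
from typing import Dict, List, Optional


def gang_schedule(free_gpus: Dict[str, int],
                  pod_requests: List[int]) -> Optional[List[str]]:
    """Place every pod of a job, or none of them.

    `free_gpus` maps a node name to its free GPU count and `pod_requests`
    lists the GPUs each pod needs. Returns one node name per pod, or None
    if the whole gang cannot be placed. Hypothetical helper for illustration.
    """
    remaining = dict(free_gpus)  # work on a copy so failure changes nothing
    placement: List[str] = []
    for need in pod_requests:
        # First fit: the first node (in name order) with enough free GPUs.
        node = next((n for n in sorted(remaining) if remaining[n] >= need), None)
        if node is None:
            return None  # one pod does not fit, so the whole job waits
        remaining[node] -= need
        placement.append(node)
    free_gpus.update(remaining)  # commit only once every pod has a node
    return placement


if __name__ == "__main__":
    cluster = {"node-a": 2, "node-b": 1}
    print(gang_schedule(cluster, [2, 2]))  # job does not fit as a whole
    print(gang_schedule(cluster, [2, 1]))  # job fits, GPUs are reserved
    print(cluster)
```

The important detail is that a failed attempt leaves the cluster state untouched, so a job that cannot fully start never holds GPUs that another job could use.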

This dynamic approach not only addresses individual project needs but also considers the overall health of shared computational resources. By implementing strategies such as bin-packing and workload spreading, the KAI Scheduler maximizes compute utilization and minimizes resource fragmentation. This careful optimization fosters an efficient computational environment, crucial for teams that rely heavily on shared clusters. Nvidia’s foresight is evident in the design, which directly confronts the inefficiencies that poor resource management can create in collaborative settings.
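The difference between bin-packing and spreading comes down to which node is preferred among those that can fit a workload. The sketch below shows one common way to express that choice; the function `pick_node` and the strategy names are assumptions made for illustration and are not KAI Scheduler's configuration or API.

```python
from typing import Dict, Optional


def pick_node(free_gpus: Dict[str, int], need: int, strategy: str) -> Optional[str]:
    """Choose a node for a workload that needs `need` GPUs.

    "binpack" prefers the fullest node that still fits, which keeps other
    nodes empty for large jobs and limits fragmentation. "spread" prefers
    the emptiest node, which balances load. Hypothetical helper.
    """
    candidates = {node: free for node, free in free_gpus.items() if free >= need}
    if not candidates:
        return None  # no single node can host the workload
    if strategy == "binpack":
        # Fewest free GPUs first; node name breaks ties deterministically.
        return min(candidates, key=lambda n: (candidates[n], n))
    if strategy == "spread":
        # Most free GPUs first; node name breaks ties deterministically.
        return min(candidates, key=lambda n: (-candidates[n], n))
    raise ValueError(f"unknown strategy: {strategy}")


if __name__ == "__main__":
    free = {"node-a": 8, "node-b": 3, "node-c": 1}
    print(pick_node(free, 2, "binpack"))
    print(pick_node(free, 2, "spread"))
```

With bin-packing, the 2-GPU workload lands on the partly used `node-b`, leaving all 8 GPUs on `node-a` available for a larger job; with spreading it lands on `node-a` instead.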

Streamlining Complexity in AI Frameworks

Beyond the intricacies of resource allocation, the integration of various AI frameworks often adds layers of complexity to project workflows. Traditionally, linking workloads to tools like Kubeflow, Ray, or Argo necessitates meticulous manual configurations that can slow down development cycles. Here again, KAI Scheduler emerges as a powerful ally. Featuring a built-in pod grouper, it simplifies these connections, automatically detecting and aligning with prevalent AI tools, thus reducing configuration headaches.
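One plausible way to picture what a pod grouper does is to follow each pod's Kubernetes owner references up to the top-level object that created it, then treat all pods sharing that object as one schedulable group. The sketch below does this over plain dictionaries; the data model and function names are hypothetical, and the real component may work differently.

```python
from collections import defaultdict
from typing import Dict, List, Optional, Tuple

Owner = Tuple[str, str]  # (kind, name), e.g. ("RayCluster", "train")


def top_owner(start: Owner, parent_of: Dict[Owner, Optional[Owner]]) -> Owner:
    """Follow owner links upward until an object with no owner is reached."""
    current = start
    seen = {current}
    while parent_of.get(current) is not None:
        current = parent_of[current]
        if current in seen:
            raise ValueError("cycle in owner references")
        seen.add(current)
    return current


def group_pods(pod_owner: Dict[str, Owner],
               parent_of: Dict[Owner, Optional[Owner]]) -> Dict[Owner, List[str]]:
    """Group pod names by their top-level owner (hypothetical helper)."""
    groups: Dict[Owner, List[str]] = defaultdict(list)
    for pod, owner in sorted(pod_owner.items()):
        groups[top_owner(owner, parent_of)].append(pod)
    return dict(groups)


if __name__ == "__main__":
    parent_of = {
        ("ReplicaSet", "rs-1"): ("Deployment", "web"),
        ("Deployment", "web"): None,
        ("RayCluster", "train"): None,
    }
    pod_owner = {
        "web-1": ("ReplicaSet", "rs-1"),
        "web-2": ("ReplicaSet", "rs-1"),
        "ray-head": ("RayCluster", "train"),
        "ray-worker-0": ("RayCluster", "train"),
    }
    for owner, pods in group_pods(pod_owner, parent_of).items():
        print(owner, pods)
```

Grouping this way means a user submits a workload through the tool they already use, and the scheduler can still reason about the job as a single unit.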

By lowering the barrier to entry for integrating different systems, Nvidia’s solution empowers teams to focus on the creative and impactful aspects of their work instead of navigating a labyrinth of settings and configurations. The result is faster prototyping, shorter development timelines, and an environment where innovation can flourish unhindered by administrative bottlenecks.

As AI continues to evolve, solutions like the KAI Scheduler stand as a testament to the importance of agility and adaptability in tech infrastructure. Nvidia’s strategic move into open source, combined with the operational efficiencies introduced by its scheduling technology, signifies not just a step forward for Nvidia but a potential turning point for AI development as a whole.
